Transforming Cloud-Native Financial Services: Key Approaches

Accelerate fintech innovation with cloud-native solutions. Discover the benefits, scalability, and cost optimization for seamless...

This article discusses the benefits of using serverless computing on Kubernetes, specifically Google's Cloud Run platform, which allows developers to package existing code in Docker containers for on-demand, pay-per-use deployment. The article also explores the use of Amazon S3 for video streaming, including making videos publicly available and restricting access through token-based authentication.

The article covers the advantages of serverless computing on Kubernetes, the features of Google's Cloud Run platform, and the use of Amazon S3 for video streaming, including token-based authentication for restricting access to subscription-based content.

Kubernetes is growing rapidly in popularity and is being embraced by organisations of every size. Adopting it, however, means hiring developers with Kubernetes skills. This can be a significant overhead, especially for bootstrapped start-ups and small organisations. Let’s see how this issue can be tackled.

Serverless computing has been a hot topic of discussion for some time, and it is not going away anytime soon. When developers are freed from the responsibility of managing infrastructure, they are better able to concentrate on developing and improving the product, which speeds up the process of bringing it to market.

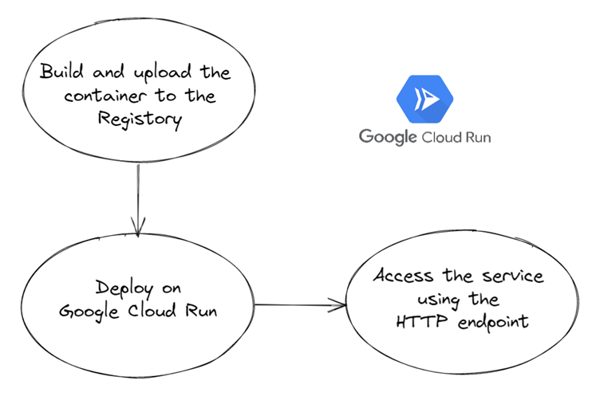

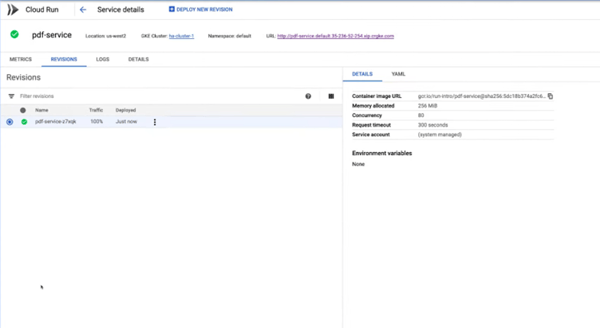

Cloud Run is a serverless platform developed by Google. It is built on Knative, a runtime environment that extends Kubernetes for serverless applications, and the Functions Framework. In contrast to other serverless services, which require us to deliver code written specifically to run as a function and be triggered by events, Cloud Run lets us package our existing code in a Docker container.

This container can run in the fully managed serverless environment of Cloud Run, but because it uses Knative, it can also run on Google Kubernetes Engine. This lets us add pay-per-use, on-demand code to our existing Kubernetes clusters. Cloud Run might not be a full-blown solution, but it does make serverless applications on Kubernetes a reality.

Knative provides an open application programming interface (API) as well as a runtime environment. This allows us to run our serverless workloads wherever we wish: fully managed on Google Cloud, on Anthos on Google Kubernetes Engine (GKE), or on our own Kubernetes cluster. Knative makes it simple to start with Cloud Run and then move to Cloud Run for Anthos, or to start with our own Kubernetes cluster and then migrate to Cloud Run. Because Knative is the foundation on which everything else is built, we can move workloads between platforms without significant switching costs.
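To make the portability point concrete, here is a minimal sketch of a Knative Serving `Service` resource built as a Python dictionary and printed as JSON. The service name `hello` and the image path `registry.example.com/demo/hello:latest` are hypothetical placeholders, not values from this article, and the manifest covers only the smallest set of fields rather than a production configuration.

```python
import json


def knative_service(name, image, port=8080):
    """Build a minimal Knative Serving `Service` manifest as a plain dict."""
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "spec": {
                    "containers": [
                        {
                            "image": image,
                            # Port the container listens on for HTTP requests.
                            "ports": [{"containerPort": port}],
                        }
                    ]
                }
            }
        },
    }


# Hypothetical name and image, used only for illustration.
manifest = knative_service("hello", "registry.example.com/demo/hello:latest")
print(json.dumps(manifest, indent=2))
```

Saved to a file, the printed JSON should be usable with `kubectl apply -f` on a cluster that has Knative Serving installed. Because the resource describes only a container image and a port, the same definition can in principle move between Knative-based platforms with few changes.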

Key features of Cloud Run include:

- Rapid autoscaling

- Split traffic

- Automatic redundancy

- No vendor lock-in

Cloud Run needs the code to be a stateless HTTP container that is built for 64-bit Linux, starts an HTTP server within four minutes (240 seconds) of receiving a request, and responds to each request within the request timeout. The container should listen on the port given by the PORT environment variable, which defaults to 8080, rather than hard-coding the port number.
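The container requirements can be illustrated with a small sketch that uses only the Python standard library. It assumes the platform supplies the port through the `PORT` environment variable and falls back to 8080 otherwise. The names `resolve_port`, `HelloHandler`, and `main` are illustrative choices, not part of any Cloud Run API.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def resolve_port(env=os.environ, default=8080):
    """Return the port to listen on: the PORT variable if set, else the default."""
    return int(env.get("PORT", default))


class HelloHandler(BaseHTTPRequestHandler):
    """Stateless handler: each response depends only on the incoming request."""

    def do_GET(self):
        body = b"Hello from a container\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def main():
    # Bind to all interfaces so traffic routed into the container can reach the server.
    server = HTTPServer(("0.0.0.0", resolve_port()), HelloHandler)
    server.serve_forever()
```

Calling `main()` from the container's entrypoint starts the server. Reading the port from the environment, rather than writing 8080 into the code, keeps the same image usable if the platform assigns a different port.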

Note: Every Cloud Run service has a stable HTTPS endpoint by default, with TLS termination handled for us.

Typical use cases for Cloud Run include:

- Websites

- REST API backend

- Lightweight data transformation

- Scheduled document generation, such as PDF generation

- Workflow with webhooks

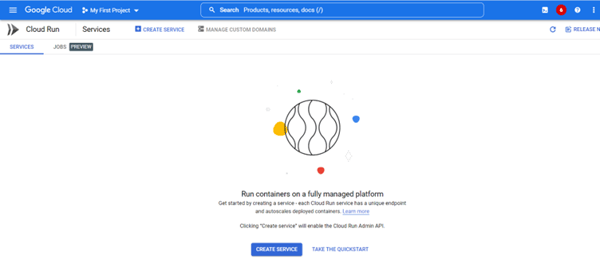

Before getting started, make sure the appropriate Google Cloud project is selected and that billing is enabled for it.

There are multiple ways to deploy to Cloud Run:

- Deploying a pre-built container

- Building and deploying a container from source code

We can create both services and jobs using Cloud Run.

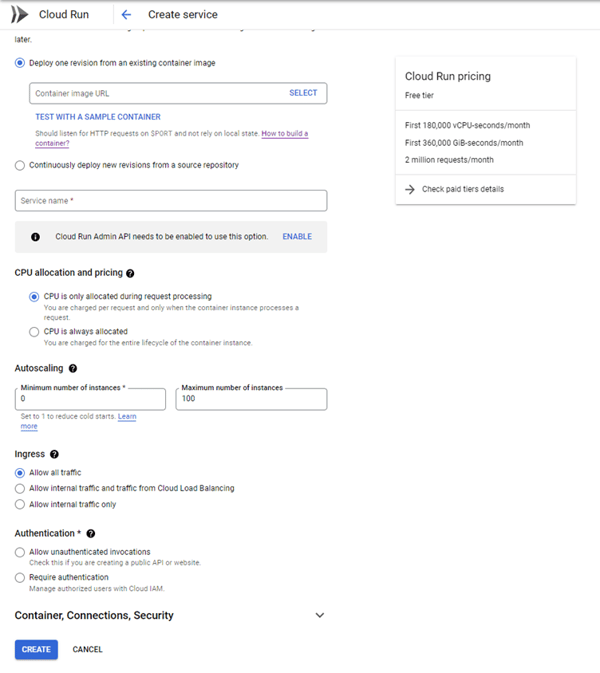

To deploy a pre-built container as a service:

- Select Deploy one revision from an existing container image.

- We can either provide our container image URL or click Test with a sample container.

- In the Region drop-down menu, select the region where we want the service to be located.

- Under Authentication, we will select Allow unauthenticated invocations. We can modify permissions as per our use case.

- Finally, click Create to deploy the container image to Cloud Run and wait for the deployment to finish.
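The same deployment can also be scripted. The sketch below assembles a `gcloud run deploy` command that mirrors the steps above, assuming the `gcloud` CLI is installed and authenticated. The service name `hello` and the region `us-central1` are hypothetical, and the image path is assumed to be Google's public sample container. The helper `deploy_command` is illustrative only.

```python
import shlex


def deploy_command(service, image, region, allow_unauthenticated=True):
    """Assemble the argv for `gcloud run deploy`, mirroring the console steps."""
    argv = [
        "gcloud", "run", "deploy", service,
        "--image", image,
        "--region", region,
    ]
    # "Allow unauthenticated invocations" corresponds to this flag pair.
    if allow_unauthenticated:
        argv.append("--allow-unauthenticated")
    else:
        argv.append("--no-allow-unauthenticated")
    return argv


argv = deploy_command(
    service="hello",  # hypothetical service name
    image="us-docker.pkg.dev/cloudrun/container/hello",  # assumed sample image
    region="us-central1",  # example region
)
print(shlex.join(argv))
```

The printed line can be pasted into a shell, or the list can be passed to `subprocess.run(argv, check=True)`. Building the command as a list avoids shell-quoting problems when names contain unusual characters.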

Note: We can configure CPU allocation and autoscaling as needed. We can also specify security settings and reference secrets.

While Kubernetes may appear cumbersome, serverless technologies such as Cloud Run can streamline and speed up application development.
